Hyperspectral satellite imagery for Earth observation

What is hyperspectral imagery?

Until recently, imaging satellites carried cameras that captured the Earth in only a few frequency bands. These multispectral sensors typically cover the red, green, and blue bands of visible light plus a few other frequency ranges.

But a revolution is underway. A growing number of satellites equipped with hyperspectral cameras will let us observe the Earth across a large frequency range, well beyond what the human eye can see, and in much finer spectral detail than existing multispectral sensors such as those on Landsat or Sentinel-2.

Hyperspectral imagery enables applications such as tree and plant species identification, monitoring of air quality (particulate and gaseous) and water quality (e.g. algal bloom detection), tree health observation, and more.

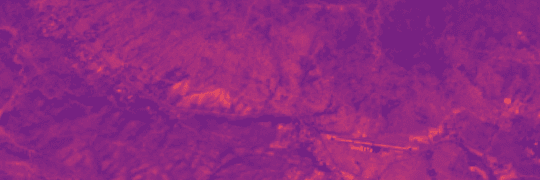

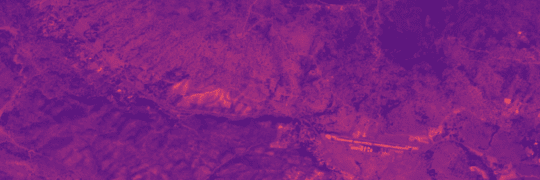

Compare visible light to multi- and hyperspectral images

Visible light

3 spectral bands

An RGB image can be used to identify vegetation by shape and color.

Multispectral

5-35 spectral bands

A 13-band Sentinel-2 image can be used to differentiate between some crops and detect plants under stress.

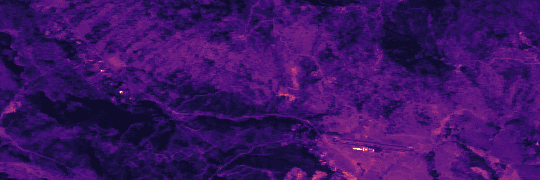

Hyperspectral

100s of spectral bands

The 224-band AVIRIS sensor enables detection of plant chemical composition and physiology changes attributed to weeds, diseases, or nutrient deficiency.

The visualization above shows one spectral band at a time. The amount of data contained in a single image can also be represented as a hyperspectral cube.

Hyperspectral data cube

Different materials can often be identified by their characteristic spectral signature. Hyperspectral imaging sensors cover a wider portion of the spectrum in narrow, contiguous frequency bands. They make it possible to capture and identify spectral profiles, such as those of healthy (green-stage) and stressed (red-stage) conifers in California.
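One way to picture the cube in code is as a three-dimensional array with two spatial axes and one spectral axis. The sketch below is a minimal illustration, assuming NumPy and synthetic values in place of real sensor data. The cube dimensions, the (row, column, band) axis order, and the helper names `band_image` and `spectral_profile` are our own assumptions for illustration; real data products may store the axes in a different order.

```python
import numpy as np

# Hypothetical scene: 4 x 5 pixels with 224 bands (the AVIRIS band count).
ROWS, COLS, BANDS = 4, 5, 224

# Synthetic values stand in for real reflectance measurements.
rng = np.random.default_rng(seed=0)
cube = rng.random((ROWS, COLS, BANDS), dtype=np.float32)


def band_image(cube: np.ndarray, band: int) -> np.ndarray:
    """Return the 2-D image for a single spectral band."""
    return cube[:, :, band]


def spectral_profile(cube: np.ndarray, row: int, col: int) -> np.ndarray:
    """Return the spectrum (one value per band) for a single pixel."""
    return cube[row, col, :]


print(band_image(cube, 10).shape)          # (4, 5)
print(spectral_profile(cube, 2, 3).shape)  # (224,)
```

Slicing along the band axis gives the one-band-at-a-time view, while slicing at a pixel gives that pixel's spectral profile.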

Explore the spectral profiles in a hyperspectral image


AVIRIS images contain 224 data points in each pixel, one per spectral band. We can compare them with reference spectral profiles known from materials science, and tracking how they change over time makes it possible to measure properties such as tree health.
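A common way to compare a pixel's spectrum with reference profiles is the spectral angle, which treats each spectrum as a vector and measures the angle between them. The sketch below illustrates that general technique and is not necessarily the comparison used in our study. The 5-band profiles and the names `spectral_angle`, `best_match`, and `library` are made up for illustration, and the code assumes every spectrum has at least one non-zero value.

```python
import math


def spectral_angle(spectrum, reference):
    """Angle in radians between two spectra treated as vectors.

    Smaller angles mean more similar spectral shapes, regardless of
    overall brightness.
    """
    dot = sum(s * r for s, r in zip(spectrum, reference))
    norm_s = math.sqrt(sum(s * s for s in spectrum))
    norm_r = math.sqrt(sum(r * r for r in reference))
    # Clamp to [-1, 1] to guard against floating-point rounding.
    cosine = max(-1.0, min(1.0, dot / (norm_s * norm_r)))
    return math.acos(cosine)


def best_match(spectrum, library):
    """Return the name of the reference profile with the smallest angle."""
    return min(library, key=lambda name: spectral_angle(spectrum, library[name]))


# Made-up 5-band profiles; a real AVIRIS pixel would have 224 values.
library = {
    "healthy conifer": [0.05, 0.08, 0.04, 0.45, 0.30],
    "stressed conifer": [0.08, 0.10, 0.12, 0.30, 0.28],
}
pixel = [0.06, 0.09, 0.05, 0.43, 0.31]

print(best_match(pixel, library))  # healthy conifer
```

Because the angle ignores vector length, a uniformly brighter or darker pixel of the same material still matches the same reference.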

Manually comparing spectral profiles is inefficient at scale. Using machine learning, we can train a model to identify the spectral characteristics of different materials. New imagery can then be classified more quickly and at scale.
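To make the idea concrete, here is a deliberately simple stand-in: a nearest-centroid classifier that learns one mean spectrum per class from labeled pixels and assigns each new pixel to the closest one. This is not the model used in our study. The synthetic spectra, the noise level, the class labels (0 for green-stage, 1 for red-stage), and all function names are assumptions for illustration.

```python
import numpy as np

BANDS = 224
rng = np.random.default_rng(seed=0)

# Synthetic "true" spectra for two classes (illustrative shapes only).
healthy_mean = np.linspace(0.2, 0.6, BANDS)
stressed_mean = np.linspace(0.4, 0.3, BANDS)


def sample(mean: np.ndarray, n: int) -> np.ndarray:
    """Draw n noisy spectra around a mean spectrum."""
    return mean + rng.normal(scale=0.02, size=(n, BANDS))


def fit_centroids(spectra: np.ndarray, labels: np.ndarray):
    """Training step: compute the mean spectrum of each labeled class."""
    classes = np.unique(labels)
    centroids = np.stack([spectra[labels == c].mean(axis=0) for c in classes])
    return classes, centroids


def classify_cube(cube: np.ndarray, classes: np.ndarray, centroids: np.ndarray):
    """Label every pixel with the class of its nearest centroid."""
    rows, cols, bands = cube.shape
    pixels = cube.reshape(-1, bands)  # (n_pixels, bands)
    # Euclidean distance from every pixel to every class centroid.
    distances = np.linalg.norm(pixels[:, None, :] - centroids[None, :, :], axis=2)
    return classes[distances.argmin(axis=1)].reshape(rows, cols)


# Labeled training pixels: 0 = green-stage, 1 = red-stage.
train_spectra = np.vstack([sample(healthy_mean, 50), sample(stressed_mean, 50)])
train_labels = np.array([0] * 50 + [1] * 50)
classes, centroids = fit_centroids(train_spectra, train_labels)

# A 2 x 2 "scene": top row healthy, bottom row stressed.
scene = np.stack([sample(healthy_mean, 2), sample(stressed_mean, 2)])
print(classify_cube(scene, classes, centroids))
```

Once the centroids are fitted, classifying a new scene is a single vectorized pass over its pixels, which is what makes this kind of approach practical at scale. Real models are typically more sophisticated and need far more labeled data.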

Techniques for producing operational analyses are still at an early stage. Hyperspectral imagery is currently limited, and ground truth and model training data are scarce.

The image below shows early results from our tree health study area in California. Here, we used hyperspectral imagery to distinguish between healthy (green-stage) conifers and conifers under stress (red-stage). These results are from an ML model that works on 224-band AVIRIS imagery.

See how a machine learning model can detect conifer health

Hyperspectral image

What the human eye can see

Element 84 R&D vegetation model

Using machine learning on spectral profiles

These are early results shown for illustration only.

Hyperspectral at Element 84

NASA expects to see a major increase in the number of hyperspectral data sets in the coming years. Supported by an innovation research grant from NASA, Element 84 created a reusable, open source data processing pipeline for serving and analyzing hyperspectral imagery.

Our pipeline for hyperspectral imagery processing on the web

Work with us on your hyperspectral project

Element 84 is a woman-owned small business that works with public, private, and non-profit sector clients to develop geospatial data processing pipelines and build software.

Get in touch at element84.com/who-we-are/contact-us/

Hyperspectral Satellite Launch Tracker

See available HSI data and find out when more becomes available

| Launch date | Name | Provider | Platform | Constellation size now / planned | Wavelengths in nm | Spectral res. in nm | Spatial res. in m |
|---|---|---|---|---|---|---|---|
| N/A | AVIRIS (classic) | NASA/JPL | Airborne | | 380-2510 | 10 | Varies |
| N/A | AVIRIS-NG | NASA/JPL | Airborne | | 380-2510 | 5 | Varies |
| 2018 | DESIS | DLR | ISS | | 400-1000 | 2.55 | 30 |
| 2019 | PRISMA | ASI | Satellite | | 400-2500 | 10 | 30 |
| 2022 | EnMAP (VNIR) | DLR | Satellite | | 420-1000 | 6.5 | 30 |
| 2022 | EnMAP (SWIR) | DLR | Satellite | | 900-2450 | 10 | 30 |
| 2020-25 | Satellogic Aleph-1 | Satellogic | Satellite | 38 / 200+ | 460-830 | 14-35 | 25 |
| 2022-23 | PIXXEL | PIXXEL | Satellite | 6 | | | 5 |
| 2023-24 | Orbital Sidekick GHOSt | Orbital Sidekick | Satellite | 3 / 6 | 400-2500 | 3-5 | 8.3 |
| 2023 | Esper | Esper | Satellite | 18 | 400-2000 | 6 | 6 |
| 2023 | Carbon Mapper (Tanager) | Planet/JPL/CarbonMapper Coalition | Satellite | 2 | | 5 | 30 |
| 2023 | Wyvern | Wyvern Space | Satellite | 36 | | | <5 |
| 2023 | HySpec | HySpecIQ | Satellite | 12 | | 105 | 5 |
| 2023 | HyperSat | HyperSat | Satellite | 6 | | | |
| 2023 | Kuva Hyperfield-1 | Kuva Space | Satellite | | 450-1000 | | 25 |
| early 2024 | PACE | NASA | Satellite | 1 | 350-885 | 1.25 or 2.5 | |
| 2024 | PIXXEL | PIXXEL | Satellite | 30 | 400-2500 | | 5 |
| 2027/28 | SBG | NASA | Satellite | | | 10 | 30 |
| 2029 | CHIME (Sentinel 10) | ESA | Satellite | | 400-2500 | 10 | |
| Unknown | BlackSky | BlackSky | Satellite | Unknown | | | |

Launch dates and specifications may change, and launches may be delayed. Last updated Tue Feb 27 2024.
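The wavelength range and spectral resolution in the table give a rough sense of how many bands a sensor records. The sketch below divides one by the other, assuming evenly spaced, contiguous, non-overlapping bands. That is a simplification, so the results are estimates rather than official band counts. The function name `approx_band_count` is our own, and the input values are copied from the table.

```python
def approx_band_count(min_nm: float, max_nm: float, spectral_res_nm: float) -> int:
    """Rough band count: wavelength range divided by spectral resolution."""
    return round((max_nm - min_nm) / spectral_res_nm)


# (min wavelength, max wavelength, spectral resolution), all in nm,
# taken from the table rows for these sensors.
sensors = {
    "DESIS": (400, 1000, 2.55),
    "PRISMA": (400, 2500, 10),
    "EnMAP (VNIR)": (420, 1000, 6.5),
}

for name, (min_nm, max_nm, res_nm) in sensors.items():
    print(f"{name}: ~{approx_band_count(min_nm, max_nm, res_nm)} bands")
```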